Tech behemoth OpenAI has touted its artificial intelligence-powered transcription tool Whisper as having near “human level robustness and accuracy.”

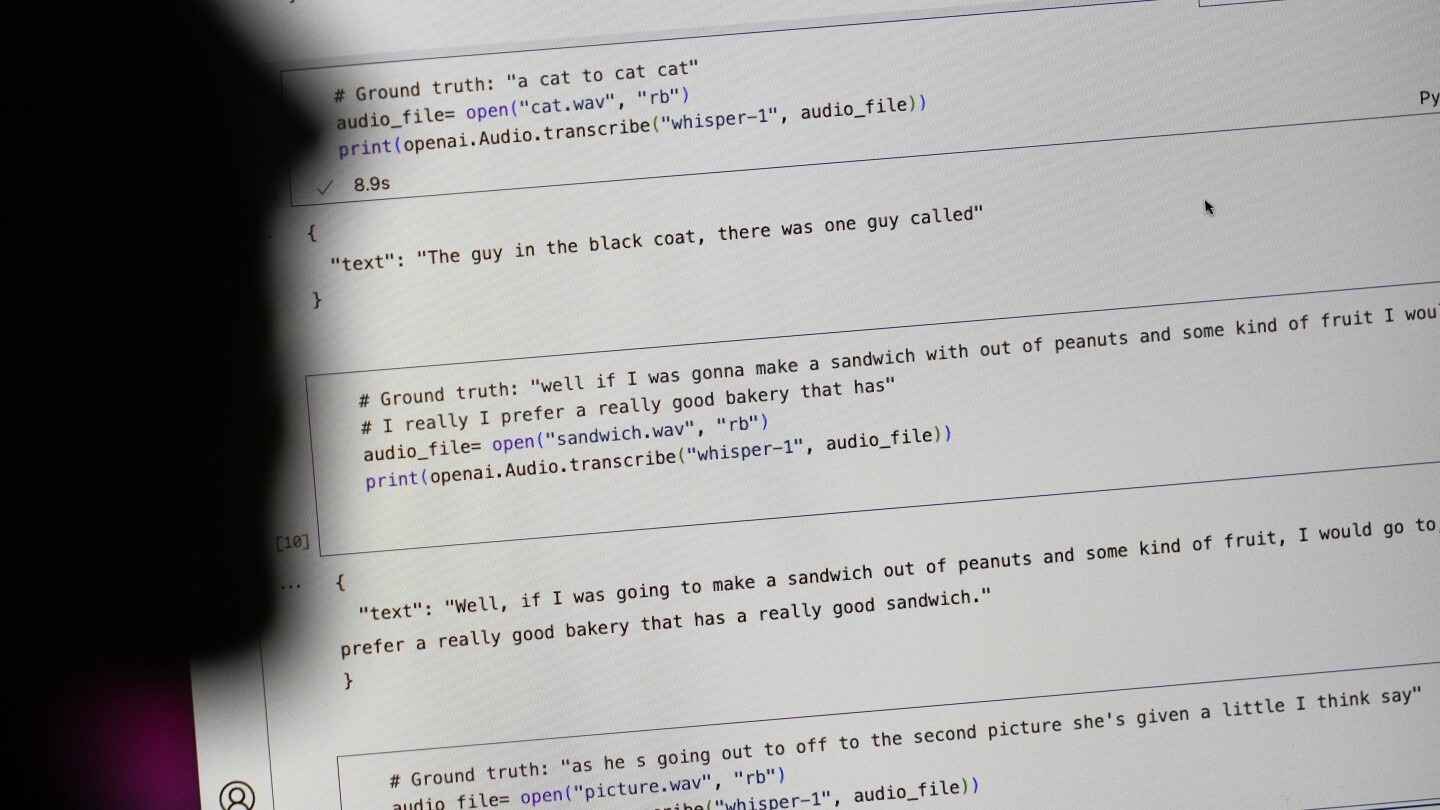

But Whisper has a major flaw: It is prone to making up chunks of text or even entire sentences, according to interviews with more than a dozen software engineers, developers and academic researchers. Those experts said some of the invented text — known in the industry as hallucinations — can include racial commentary, violent rhetoric and even imagined medical treatments.

Experts said that such fabrications are problematic because Whisper is being used in a slew of industries worldwide to translate and transcribe interviews, generate text in popular consumer technologies and create subtitles for videos.

More concerning, they said, is a rush by medical centers to use Whisper-based tools to transcribe patients’ consultations with doctors, despite OpenAI’s warnings that the tool should not be used in “high-risk domains.”

The toaster oven I just invented works much better than a traditional one. It reheats French fries perfectly, you can dehydrate food in it, it makes succulent roasted chicken, and about 2.5% of the time it burns down your house. You’ll always need to keep an eye on it to make sure that doesn’t happen. Remember, though: much better than a traditional one.

Can I try the chicken before I make a decision?

You need an editor for traditional transcription tools too :) and with them it’s A LOT more work. They don’t even do punctuation or names.